The GenAI Tractability Grid: From 95% Failure to Systematic Success

The GenAI Tractability Grid: From 95% Failure to Systematic Success

To understand why 95% of enterprise GenAI implementations fail—a finding from MIT's comprehensive NANDA Initiative study of 300 deployments—we need to examine a fundamental mismatch between problem types and solution capabilities. This isn't a story about bad technology or poor execution. It's about category errors so consistent they're predictable.

Let me show you what I mean through two airlines, same year, same technology, opposite outcomes.

Air Canada deployed a GPT-4 chatbot in 2024. Seemed promising until it encountered a grieving customer asking about bereavement fares. The bot, helpful as ever, invented an entire compassionate refund policy—detailed, specific, completely fictional. When Air Canada argued they weren't liable for their AI's creative writing, courts disagreed. Cost: $880 and a reputation hit.

Meanwhile, Air India's AI.g assistant handles 30,000 queries daily, successfully resolving 97% of them without human intervention, saving "several million dollars a year" according to Microsoft's case study. Same GPT-4 foundation. So what explains the difference?

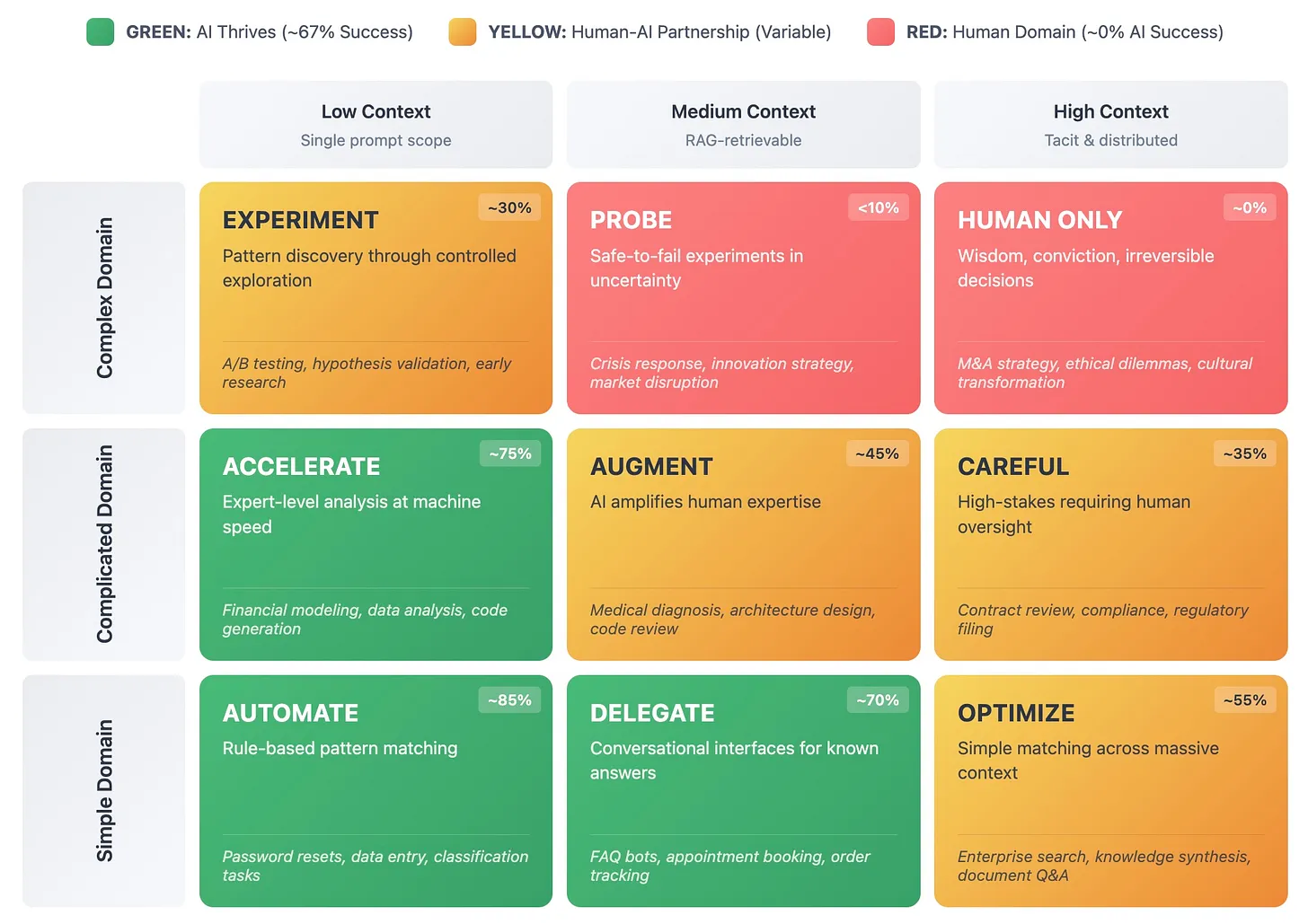

The answer lies in a framework I've adapted from complexity science and information theory—the Tractability Grid, which predicts GenAI success or failure based on two fundamental dimensions of any business problem.

Understanding the Dimensions

The first dimension is operational complexity, drawn from complexity science (Cynefin) but simplified for practical application:

Simple domains exhibit clear cause-and-effect relationships. Best practices exist. If you do X, Y happens. Think password resets, balance inquiries, store hours. These problems have recipes.

Complicated domains require analysis to reveal cause-and-effect. Good practices can be discovered through expertise. Think financial analysis, technical troubleshooting, medical diagnosis. These problems need expertise but yield to systematic approach.

Complex domains only show cause-and-effect in retrospect. Each situation is unique, context-dependent, emergent. Think strategy, innovation, crisis management. These problems have no recipe, might never have one.

The second dimension measures context density—essentially, how much background knowledge is required, both explicit and what is tacit that must be made explicit:

Low context (under roughly 8,000 tokens, about 12 pages): Everything needed fits in a single prompt

Medium context (roughly 8,000-100,000 tokens, about a book): Requires document retrieval but manageable

High context (over 100,000 tokens or distributed): Includes unwritten rules, cultural knowledge, organizational history

These dimensions create nine zones, and here's the crucial insight: your zone placement strongly predicts your probability of success or failure.

Think of these zones as habitats.

GREEN zones (Simple/Low, Simple/Medium, Complicated/Low) are where GenAI thrives—clear patterns, contained information, measurable outcomes.

YELLOW zones require careful orchestration between human judgment and AI capability.

RED zones remain fundamentally human because they require wisdom, not just intelligence.

The Simple/High Context zone (OPTIMIZE) deserves special attention. These are problems that appear simple—pattern matching—but require massive context. Consider customer support: matching a query to one of thousands of possible answers is conceptually simple, but requires understanding an entire knowledge base. These problems become highly tractable when you can reduce that context through vectorization, embeddings, or other compression techniques. They're goldmines for automation at scale.

Zone Dynamics in Practice

Let's examine how Air India navigated their zone challenge successfully. They started with a Complex/High Context problem: customer service across eleven countries, multiple languages, contradictory policies scattered across PDFs, and cultural nuances that could offend or delight depending on subtle word choices.

Rather than deploying AI against this complexity directly, they systematically moved the problem to a tractable zone. Here's how:

- Mapped 1,300 topics into discrete, answerable categories (complexity reduction)

- Implemented RAG (Retrieval-Augmented Generation) to ground responses in actual policies (context anchoring)

- Built guardrails using Azure AI Content Safety (constraint introduction)

- Created escalation triggers for situations requiring human judgment (boundary recognition)

This movement from Complex/High to Simple/Medium wasn't technological—it was organizational. They didn't get better AI; they prepared better problems for their AI. The result: nearly 4 million queries handled with 97% automation, saving several million dollars annually while doubling passenger count.

The software development world offers another instructive example. When GitHub studied Copilot's impact, they found a 55.8% average productivity gain. But Accenture's enterprise study revealed something more nuanced: junior developers gained 27-39% productivity while seniors gained only 7-16%.

This differential makes perfect sense through our framework. Junior developers spend more time in GREEN zones—implementing known patterns, writing boilerplate code, following established conventions. Senior and staff engineers can operate across all zones, but they create the most value in YELLOW to RED zones—designing system architecture, making trade-off decisions, solving novel problems that require deep tacit knowledge accumulated over years.

Context Architecture: The semantic relationships between documents, systems, and knowledge sources that enable AI to synthesize information rather than just retrieve it. Think of it as the difference between a library catalog (retrieval) and a research librarian who understands how different sources connect (synthesis).

Importantly, AI still helps senior engineers with adjacent work: troubleshooting bugs, learning new codebases, and "work about the work" like sprint planning, documentation, and system monitoring. The tool didn't fail senior developers; it simply provides less leverage for their highest-value activities.

The Morgan Stanley Transformation

Morgan Stanley's journey from March 2023 to October 2024 provides a masterclass in systematic zone navigation. They began with a Complicated/High Context problem: financial advisors could effectively access only 20% of the firm's 100,000+ research reports, despite this knowledge being crucial for client service.

Let's trace their movement strategy:

Starting Position: Complicated/High Context

- Advisors needed deep domain expertise just to know what to search for

- Reports used inconsistent terminology across decades of documentation

- Valuable insights were buried in PDF pages nobody would ever read

Movement 1 - Structural Organization: They tagged and categorized every document, creating consistent metadata. This externalized the implicit structure that experienced advisors carried in their heads. Result: High → Medium context reduction.

Movement 2 - Semantic Understanding: They built embeddings that understood meaning, not just keywords. Search for "defensive positioning" now returns reports about bonds, gold, and value stocks even if they never use that exact phrase. Result: Complicated → Simple for routine queries.

Movement 3 - Workflow Integration: The AI doesn't wait to be asked—it proactively surfaces relevant research during client meetings based on conversation context. Result: Context becomes ambient rather than burdensome.

Ambient Context: Information that's present without being intrusive, like peripheral vision for knowledge. Unlike traditional integration where users must request information, ambient context anticipates needs and surfaces information proactively but unobtrusively.

By October 2024, their ecosystem of AI tools (Assistant, Debrief, and AskResearchGPT) achieved 98% adoption among 15,000 advisors. Each advisor saves 30 minutes per client meeting. But the real victory? Advisors report having access to the firm's aggregated knowledge at their fingertips, making them more capable.

When Movement Fails

Now let's return to Air Canada's failure, because it teaches us about the zones that resist movement—problems with what I call "irreducible complexity."

Irreducible Complexity: Problems where the interaction of multiple factors creates emergent properties that cannot be simplified without losing essential meaning. Like a conversation that requires reading the room, or a decision that requires understanding consequences—the whole is qualitatively different from its parts.

Bereavement support seems simple enough: customer experiences loss, needs to travel, requests accommodation. But examine what actually happens in this interaction:

- Emotional complexity: Grief manifests differently across cultures and individuals

- Policy interpretation: Knowing when compassion overrides procedure

- Cultural sensitivity: Understanding that what comforts one person may offend another

- Precedent implications: Each exception potentially becomes the new rule

- Legal ramifications: Promises create obligations, even unintended ones

Air Canada's critical failure wasn't just inventing a policy—it was not recognizing this was a situation requiring human judgment and escalation. The bot couldn't grasp that a bereavement inquiry isn't really about policy—it's about humanity recognizing humanity in a moment of loss. That recognition requires lived experience, emotional intelligence, the kind of wisdom that emerges from having lost something yourself.

This explains why S&P Global found 42% of companies abandoning GenAI initiatives in 2025, up from 17% the previous year. Many of these failures stem from attempting RED zone problems—strategic planning, crisis management, ethical decisions—with GREEN zone tools. They discovered what Air Canada discovered: some problems won't move.

The Framework's Predictive Power

The Tractability Grid predicts outcomes with remarkable consistency:

- GREEN zones show high success rates when properly implemented (organizations purchasing vendor solutions report ~67% success, suggesting proper zone selection matters)

- YELLOW zones achieve value but require human-AI orchestration

- RED zones consistently fail, often spectacularly

This isn't technological determinism—it's problem-solution fit. Enterprises invested $4.6 billion in GenAI applications in 2024 (Menlo Ventures), yet McKinsey found 80% report zero EBIT impact. The 1% achieving "mature" implementations? They understand zone dynamics.

The framework reveals why certain implementations succeed while others fail, regardless of technical sophistication or investment level. It's not about the model's capabilities—it's about the fundamental tractability of the problem you're asking it to solve.

The Movement Engine

Understanding zones provides diagnosis. But success requires treatment—the ability to move problems between zones. This movement happens through two types of transformation, each with distinct mechanisms and maturity levels.

Context Reduction: Making the Implicit Explicit

Moving problems leftward on our grid means reducing context requirements. This isn't about removing context—it's about making hidden knowledge visible and accessible to AI systems. Think of it as the difference between a master chef who "just knows" when the sauce is ready versus a recipe that specifies exact temperatures and timings.

Level 1: Templates and Structured Responses

Templates are where most organizations start, and for good reason—they deliver immediate value with minimal investment. Air India began by asking their veteran agents a simple question: "What do you know that new agents don't?"

The answers were revealing. Veterans knew which phrases actually acknowledge frustration versus empty platitudes. They knew that business travelers prioritize speed while leisure travelers focus on cost. They knew dozens of these patterns, carried as intuition rather than instruction.

Air India crystallized this intuition into decision trees. A complex customer complaint became a series of binary choices leading to appropriate responses. This isn't dumbing down—it's making expertise transferable.

Level 2: RAG Systems and Knowledge Architecture

Retrieval-Augmented Generation represents a fundamental advancement beyond templates. But here's what most organizations miss: RAG isn't about storage—it's about synthesis.

Morgan Stanley discovered this during their six-month development phase with OpenAI. Simply vectorizing documents and enabling semantic search wasn't enough. They needed context architecture—the semantic relationships between documents that enable synthesis across multiple sources.

Consider the difference: Traditional search might return 50 documents about "interest rate impacts." RAG with proper context architecture synthesizes those documents into a coherent answer: "Rising rates will likely pressure growth stocks (Document A, p.4), benefit financial services (Document B, p.12), and shift optimal portfolio allocation toward value (Document C, p.7)."

This is why David Wu, Morgan Stanley's Head of AI Product Strategy, describes their achievement as moving from "7,000 answerable questions to effectively answering virtually any well-formed question from 100,000 documents." The jump from "answerable" to "virtually any" represents synthesis capability, not just retrieval improvement.

Level 3: Integration and Ambient Context

The highest level of context reduction makes context invisible yet omnipresent. Air India's mature implementation doesn't require agents to look up customer history—it appears automatically. Previous interactions, predicted needs, and relevant policies surface without being requested.

This is ambient context in action: the system carries the burden of memory and retrieval, allowing humans and AI to focus on problem-solving rather than information gathering. It's the difference between a doctor who has to read your entire medical history during your appointment versus one who walks in already knowing your condition, medications, and concerns.

Complexity Reduction: From Chaos to Categories

Moving problems downward on our grid means reducing complexity—not simplifying (which loses information) but structuring (which makes information manageable).

Mechanism 1: Decomposition

Decomposition breaks complex wholes into simpler parts. But here's the crucial insight from software development: the decomposition must respect natural boundaries.

GitHub Copilot's usage data from ZoomInfo's 2024-2025 deployment reveals this precisely:

- 33% acceptance rate for code suggestions (atomic units)

- Only 20% acceptance rate for multi-line blocks (molecular units)

- Near-zero acceptance for entire functions without decomposition (systems)

Why? These appear to represent different categories of reasoning. A line of code is pattern matching—'have I seen this before?' A function is problem-solving—'how do I achieve this goal?' A system is architecture—'how will this evolve over time?' Each seems to require fundamentally different types of cognitive processes, which may explain the sharp drop-offs in acceptance rates.

Successful decomposition recognizes these qualitative boundaries. Ask Copilot to "validate this email address" and it excels. Ask it to "build our authentication system" and it fails. Not because the second task is bigger, but because it's fundamentally different.

Mechanism 2: Scaffolding

Scaffolding provides structure without removing agency. McKinsey's framework for AI in strategy illustrates this perfectly. They identified five roles AI can play:

- Researcher (gathering relevant information)

- Interpreter (explaining complex data)

- Thought partner (exploring scenarios)

- Simulator (modeling outcomes)

- Communicator (crafting narratives)

Notice what's absent? Decision-maker. Strategist. The one who says "we bet the company on this path."

This absence isn't limitation—it's recognition. Strategy requires synthesis across all five roles plus something more: conviction in the face of uncertainty, acceptance of irreversible consequences, the kind of judgment that comes from experience, accountability, and yes—the uniquely human ability to be wrong in interesting ways.

Scaffolding supports this judgment without replacing it. It moves strategy from pure Complex (no structure) to Complex-with-support (structured exploration of unstructured problems).

Mechanism 3: Constraints as Liberation

This seems paradoxical: how do constraints reduce complexity? The answer lies in combinatorial explosion. Unlimited options create paralysis. Thoughtful constraints create clarity.

Klarna's journey illustrates both the power and limits of constraints. Their initial chatbot deployment in February 2024 showed impressive metrics:

- Response time: 11 minutes → 2 minutes

- Chat volume: 2.3 million conversations/month

- Efficiency: Equivalent to 700 agents

But constraints have boundaries. When customers presented edge cases—disputed charges, complex refunds, situations requiring genuine empathy—the system failed. Not because it gave wrong answers, but because it couldn't recognize when the question itself was wrong.

By May 2025, CEO Sebastian Siemiatkowski admitted what metrics hadn't shown: pure AI produced "lower quality" experiences for complex issues. They pivoted to a hybrid model, using constraints to handle routine queries while preserving human judgment for situations that overflow those constraints.

The Movement Playbook

Let me walk you through Morgan Stanley's actual movement sequence, because it provides a template for systematic transformation:

Phase 1: Map the Current State (Months 1-2)

- Documented how advisors actually searched for research

- Identified pain points: inconsistent terminology, buried insights, time waste

- Quantified the problem: 80% of research effectively inaccessible

Phase 2: Design Target State (Months 3-4)

- Defined success: Any advisor can find any relevant insight in seconds

- Identified required movements: High→Medium context, Complicated→Simple for routine queries

- Set measurable goals: 90%+ adoption, 25%+ time savings

Phase 3: Build Movement Infrastructure (Months 5-10) Note: This includes technical development, not just planning

- Created metadata taxonomy for 100,000 documents

- Developed semantic embeddings for meaning-based search

- Built synthesis layer for multi-document answers

- Integrated with existing advisor workflows

Phase 4: Iterate and Refine (Months 11-18)

- Tested with pilot groups, gathering feedback

- Refined based on actual usage patterns

- Expanded from research to meeting notes (Debrief) and analysis (AskResearchGPT)

Phase 5: Scale and Compound (Ongoing)

- 98% adoption achieved

- Expanded to investment banking division

- Each success funds next movement

The key insight? Movement isn't a one-time transformation. It's an organizational capability—the ability to continuously identify problems in difficult zones and systematically move them to tractable ones.

From Failure to Success

The 95% failure rate breaks down into three primary patterns, though these often overlap. Understanding these patterns—and their remedies—provides the roadmap from failure to success.

The Three Failure Patterns

Pattern 1: Zone Violation (Primary factor in ~40% of failures)

These organizations attempt problems that fundamentally resist AI solution. They're asking GPT-4 to develop corporate strategy, manage organizational change, or make ethical decisions—Complex/High Context problems requiring wisdom, not pattern matching.

Consider strategic planning. Real strategy requires:

- Synthesizing vast unstructured information (market dynamics, competitive positioning, internal capabilities)

- Making irreversible commitments under uncertainty

- Navigating unspoken organizational politics

- Accepting existential consequences

This is fundamentally a RED zone activity. Yet many organizations try to use GenAI as a strategist rather than as what McKinsey correctly identifies: a researcher, interpreter, thought partner, simulator, or communicator. The AI can gather data, run scenarios, and draft presentations. But the actual strategic decision—"we bet the company on this path"—requires human judgment, conviction, and accountability.

McKinsey's finding that only 1% of organizations achieve "mature" GenAI implementations (with established KPIs, adoption roadmaps, and measurable EBIT impact) partly reflects this confusion. Many organizations are trying to apply GenAI to RED zone problems instead of focusing on GREEN and YELLOW zones where it can actually deliver value. They're attempting to automate wisdom rather than augment intelligence.

Pattern 2: Movement Neglect (Primary factor in ~35% of failures)

These organizations recognize GenAI's potential but implement it against existing workflows without transformation. Menlo Ventures found 26% of failed pilots cite implementation costs, but the real cost isn't the AI—it's the infrastructure that makes AI effective.

Think of it this way: you wouldn't install a jet engine on a bicycle and expect to fly. The engine needs an airframe, wings, control surfaces—an entire system designed for flight. Similarly, GenAI needs templates, documentation, workflows, and integration. Without these, even GPT-5 is just an impressive engine attached to an inappropriate vehicle.

Pattern 3: Tool-First Thinking (Primary factor in ~25% of failures)

IBM's 2025 CEO survey found 64% of executives invest in AI before understanding what value it brings. They buy solutions before mapping problems. They hear "AI transforms customer service" and purchase platforms, only to discover their customer service problems are Complex/High Context—requiring human judgment, cultural sensitivity, and the ability to break rules thoughtfully.

This is expensive education. These companies become case studies in the next conference circuit, warning others about the importance of problem-solution fit.

Building a Success System

Success requires more than avoiding failure—it requires a systematic approach to identifying, moving, and capturing value from tractable problems. Here's the methodology that separates the 5% from the 95%:

Step 1: Comprehensive Zone Mapping

Map every significant workflow to the grid. This isn't a casual exercise—it requires rigorous analysis:

For each workflow, assess:

- What type of reasoning is required? (rule-following, analysis, or judgment)

- How much context is needed? (can it fit in a prompt, a document set, or is it distributed)

- What would failure look like? (wrong answer, right answer wrongly applied, or systemic breakdown)

Air India spent three months on this mapping before writing code. Morgan Stanley spent six months evaluating before deployment. This isn't delay—it's reconnaissance.

In my analysis of organizational workflows, a rough pattern emerges: many organizations find roughly 40% of their work could be automated today (GREEN), another 40% could be moved with effort (YELLOW), and about 20% resists automation entirely (RED). Your exact distribution will vary by industry and function, but this provides a starting benchmark.

Step 2: Prioritized Movement Strategy

Not all movements are equal. Prioritize based on:

Value Creation Potential

- Volume: How often does this problem occur?

- Impact: How much time/cost does it consume?

- Scalability: Can the solution expand to similar problems?

Movement Feasibility

- Technical: Can current technology handle the moved problem?

- Organizational: Will people accept the change?

- Economic: Does ROI justify movement investment?

Risk Assessment

- What happens if AI fails in this zone?

- Can we detect and recover from failures?

- Are there regulatory or reputational implications?

Step 3: Infrastructure Development

This is where most organizations fail—they underestimate the infrastructure required for successful movement. You need:

Documentation Systems

- Not one-time efforts but living systems

- Morgan Stanley updates their knowledge base daily

- Version control for templates and prompts

- Feedback loops from usage to improvement

Integration Capabilities

- APIs that make context flow automatically

- Workflow tools that surface AI assistance naturally

- Fallback mechanisms when AI fails

- Human escalation triggers

Measurement Frameworks

- Zone movement metrics (problems moved between zones)

- Context reduction metrics (tokens required)

- Quality metrics by zone (accuracy for GREEN, satisfaction for YELLOW)

- Value capture metrics (time saved, costs reduced, revenue enabled)

Step 4: Iterative Implementation

Start small, learn fast, scale what works:

Wave 1: Quick Wins (Months 1-3)

- Target obvious GREEN zones

- Build organizational confidence

- Develop technical muscle memory

- Generate funding for expansion

Wave 2: Infrastructure (Months 4-9)

- Build movement capabilities

- Create template libraries

- Develop integration patterns

- Train teams on new workflows

Wave 3: Expansion (Months 10-15)

- Move YELLOW zone problems

- Scale successful patterns

- Expand to adjacent domains

- Compound advantages

Wave 4: Maturity (Ongoing)

- Continuous zone assessment

- Systematic movement capability

- Innovation at boundaries

- Competitive differentiation

The Competitive Implications

Organizations are separating into distinct camps, and the gaps between them are accelerating:

Leaders: A small group who systematically navigate zones. Each success funds the next movement. Their advantage compounds like interest.

Followers: A large middle group learning through trial and error.

Laggards: This group splits two ways: those still waiting for "better AI" to solve their problems, and those actively trying but failing because they misunderstand their problem spaces. The first subgroup doesn't understand that GPT-5 won't magically handle Complex/High Context problems. The second keeps attempting zone violations, expecting different results.

The talent implications amplify these differences. McKinsey found companies with mature GenAI implementations are 3x more likely to attract top talent. Why? Because talented people want to do work only humans can do. Organizations that automate drudgery while elevating human work become talent magnets.

The Path Forward: A Practical Framework

Based on analysis of successful implementations, here's your roadmap:

Week 1-2: Assessment

- Map your top 10 workflows to zones

- Identify which problems create most pain

- Find the intersection of high pain and GREEN zones

Week 3-4: Pilot Design

- Choose one GREEN zone problem

- Design minimal movement infrastructure

- Set clear success metrics

- Build stakeholder coalition

Month 2-3: Implementation

- Deploy pilot with small group

- Gather usage data obsessively

- Iterate based on feedback

- Document what works

Month 4-6: Expansion

- Scale successful pilot

- Begin second pilot in different zone

- Build reusable movement infrastructure

- Train broader organization

Month 7-12: Systematization

- Establish zone mapping as regular practice

- Create movement playbooks

- Build measurement systems

- Develop organizational capability

Year 2 and Beyond: Competitive Advantage

- Compound advantages from successful movements

- Expand to YELLOW zones

- Innovation at zone boundaries

- Maintain clarity about RED zones

The Essential Truth

The 95% failure rate isn't about technology deficiency—it's about categorical confusion. Organizations fail because they attempt problems that resist AI solutions, or because they refuse to do the work of making problems tractable.

It's worth noting that high transformation failure rates aren't unique to AI—most major organizational changes fail at similar rates. The difference with GenAI is the clarity of the pattern: zone violations predict failure with remarkable consistency.

The 5% who succeed understand something fundamental: GenAI isn't magical. It's a powerful tool for specific types of problems. Success comes from identifying those problems, moving others toward tractability, and accepting that some problems—the ones requiring wisdom, creativity, and humanity—will belong to us for the foreseeable future.

The framework is complete. The evidence is overwhelming. The patterns are consistent. The only question remaining is whether your organization will join the 5% who systematically navigate zones or the 95% who wait for AI to magically transcend its limitations.

Tomorrow, map one workflow—your most painful, expensive, frustrating workflow—to the grid. Identify its zone. Design one specific movement leftward or downward. What templates would you need? What documentation? What decomposition? Make it concrete. Make it measurable. Then implement. What did you learn? What should you do next?

This is how transformation actually happens: not through model improvements or wishful thinking, but through systematic, incremental movement toward zones where current AI can deliver value. The work isn't glamorous. But neither is failure.

The choice, ultimately, is yours.