100x People and Organizations in the Age of AI

How to kick so much ass in the age of AI that it echoes through the galaxy

I've been playing Dungeons & Dragons for thirty-five years. In D&D there are two kinds of characters: player characters, who drive the story, and non-player characters, who populate the background. PCs make choices, take risks, bear consequences. NPCs react. They serve a function. They wait for someone else's plot to give them purpose. I can hear the groans from some readers right now: it has become obnoxiously trendy to label a person as a PC or an NPC. But the frame has merit, and we are all actively choosing which one we are right now. That choice is becoming the defining question of the AI era.

Some people are using AI to become dramatically more capable. They're shipping projects that would have taken teams, moving into domains they couldn't have accessed before, compressing months of work into weeks. Others are using the same tools to do roughly what they did before, just a little faster. Same tasks, same scope, same dependence on others to frame the problem and verify the outcome.

The difference isn't technical skill. It isn't prompt engineering. It isn't access to better models. Those are necessary but not sufficient to explain this gap.

The difference is something we've recognized for decades, now amplified beyond recognition. The mindset, the domain knowledge, the hunger to own a problem end-to-end: these were always the differentiators. But in 2026, people who combine those traits with AI fluency are shipping work that used to require entire teams. Look at what individual developers are producing right now. Single people are building what previously needed a massive team, a department, or a whole company.

Really look at this. These are GitHub stats from Jeff Emanuel, aka Dicklesworthstone on GitHub or @doodlestein on Twitter.

You might not fully understand what these mean, but as an engineering leader of 20+ years, I can tell you this level of output is unprecedented, and the trajectory for 2026 is, frankly, world-shaking in its implications. The gap between people like Jeff and everyone else isn't 2x anymore. It's 10x and appears to be headed to 100x. But what makes the difference?

The common thread: the capacity, drive, hunger, and skills to take a problem from messy ambiguity to outcome without waiting for permission, scaffolding, or validation. And to do so at ever-growing scope and scale. I call this loop-closing radius (LCR), and the AI era is amplifying this ability.

I've been thinking about this because I've seen it every week for years now. I run AI transformation at BetterUp, and the pattern is unmistakable: give the same tools to two people with similar backgrounds, and one of them will build something that changes how the team works while the other produces a slightly faster version of last quarter's deliverable. We call it having an AI Pilot mindset, which means having high agency, high optimism, cognitive agility, and a hunger to play the game on a whole different level.

There's a framework that's been floating around Silicon Valley for a decade that attempts to capture this divide, and I've been spending time with why it resonates, where it falls short, and why the underlying idea matters enormously right now.

The framework sounds clarifying, but upon consideration I think it actually obscures more than it reveals. Keith Rabois, in his 2014 Stanford lecture "How to Operate," introduced the distinction between "barrels" and "ammunition." The idea: most people in an organization are ammunition. They can do good work, but only when loaded into a barrel and aimed at a target. Barrels are the rare ones who can take a vague goal and run with it autonomously, expanding scope until they hit their limit and break.

The metaphor is sticky. It flatters the people who hear it (everyone imagines themselves a barrel, right?). It gives executives a shorthand for talent evaluation. And it contains a kernel of truth: some people can own a problem end-to-end, and some can't. But the metaphor also has problems — like all maps, it describes the terrain imperfectly.

Here's a hypothetical that shows why. Two product managers at the same company get access to the same AI tools. They receive the same training, the same encouragement, and the same incentives, and they work in the same system. One of them uses AI to automate her weekly status reports, summarize her emails, and prepare for meetings. It saves her hours a week. The other does those things too, but he also looks at the customer churn data his team has been struggling with for months, uses AI to build a retention analysis, identifies a pattern nobody had noticed, proposes a fix to his team using a vibe-coded demo, and ships a prototype intervention within two weeks. Same tools. Same title. Same tenure. Completely different outcomes.

Rabois would say the second PM is a "barrel" and the first is "ammunition." But that tells you nothing about why, offers no developmental path, and ignores that the first PM might operate like a barrel in a different context.

The Limits of the Gun Metaphor

First, it's binary. You're either a barrel or you're not. And that's how many leaders see their staff, despite the obvious reality that human capability is massively context and problem-dependent. This is my primary complaint with the model. It's a sorting mechanism, not a framework for measuring, fostering, and growing people. It discovers ceilings; it doesn't raise them. The manager expands a person's scope; the employee either handles it or doesn't. There's no theory of why some people can handle more, and no framework for developing the ones who can't yet.

Second, it's acontextual. Rabois himself noted that "a barrel at one company may not be a barrel at another company." The qualities that make someone effective at owning problems end-to-end are partly environmental. A brilliant operator at a 50-person startup might flounder at a 5,000-person enterprise. Not because their capability changed, but because the terrain did. The metaphor flattens this into a binary trait and misses the contextual factors that enable or disable people from acting agentically.

Third, it's passive. Ammunition doesn't choose where it's aimed. The framing subtly suggests that most people are resources to be deployed rather than agents who shape their own contribution. This may describe how some executives think about talent, but it's a poor model for how capability actually develops. It misses the clear fact that everyone is a barrel at some level of problem size, operating domain, and time horizon.

So here's where I've landed after sitting with this for a while: we need a frame that helps people grow, not just one that sorts them.

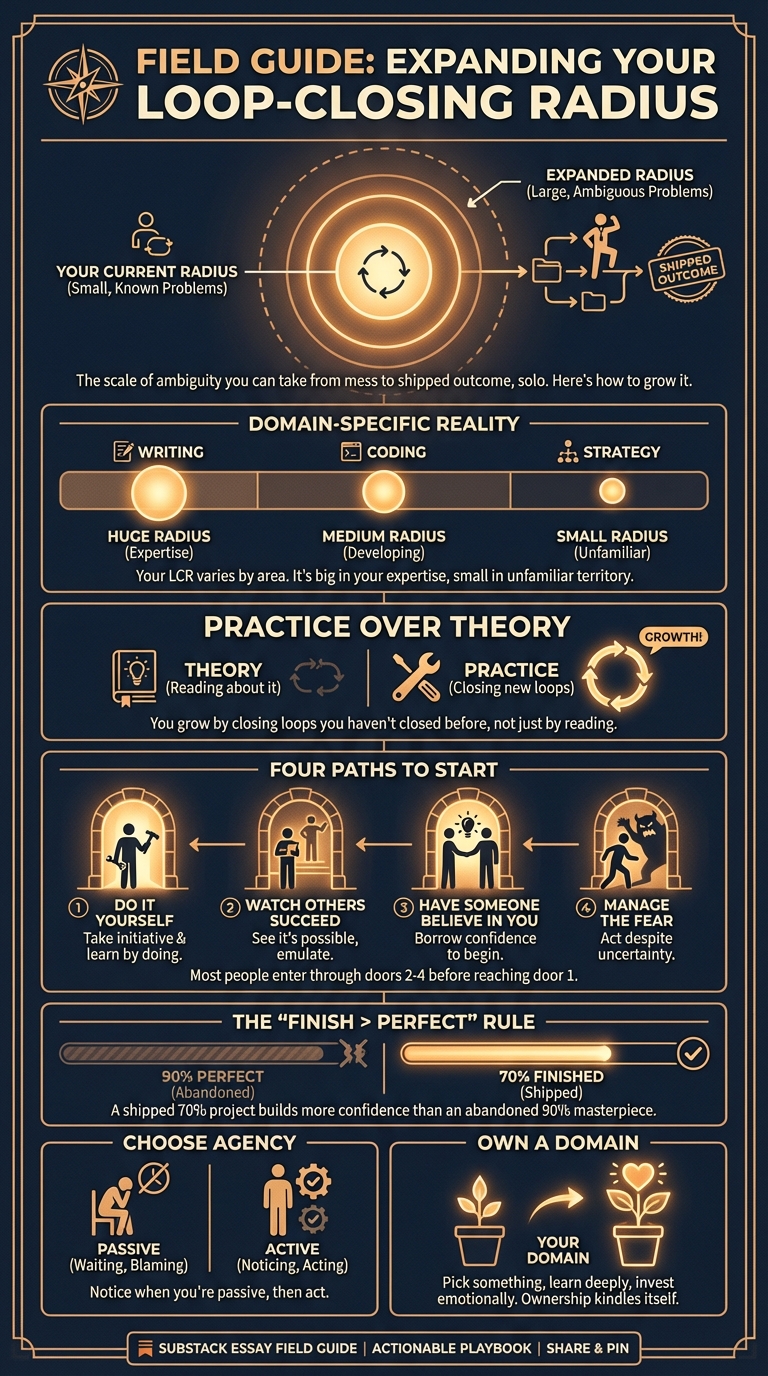

Loop-Closing Radius (LCR)

Here's a different way to think about it: loop-closing radius.

The question isn't "are you a barrel or ammunition?" It's: How large a loop can you close without external scaffolding?

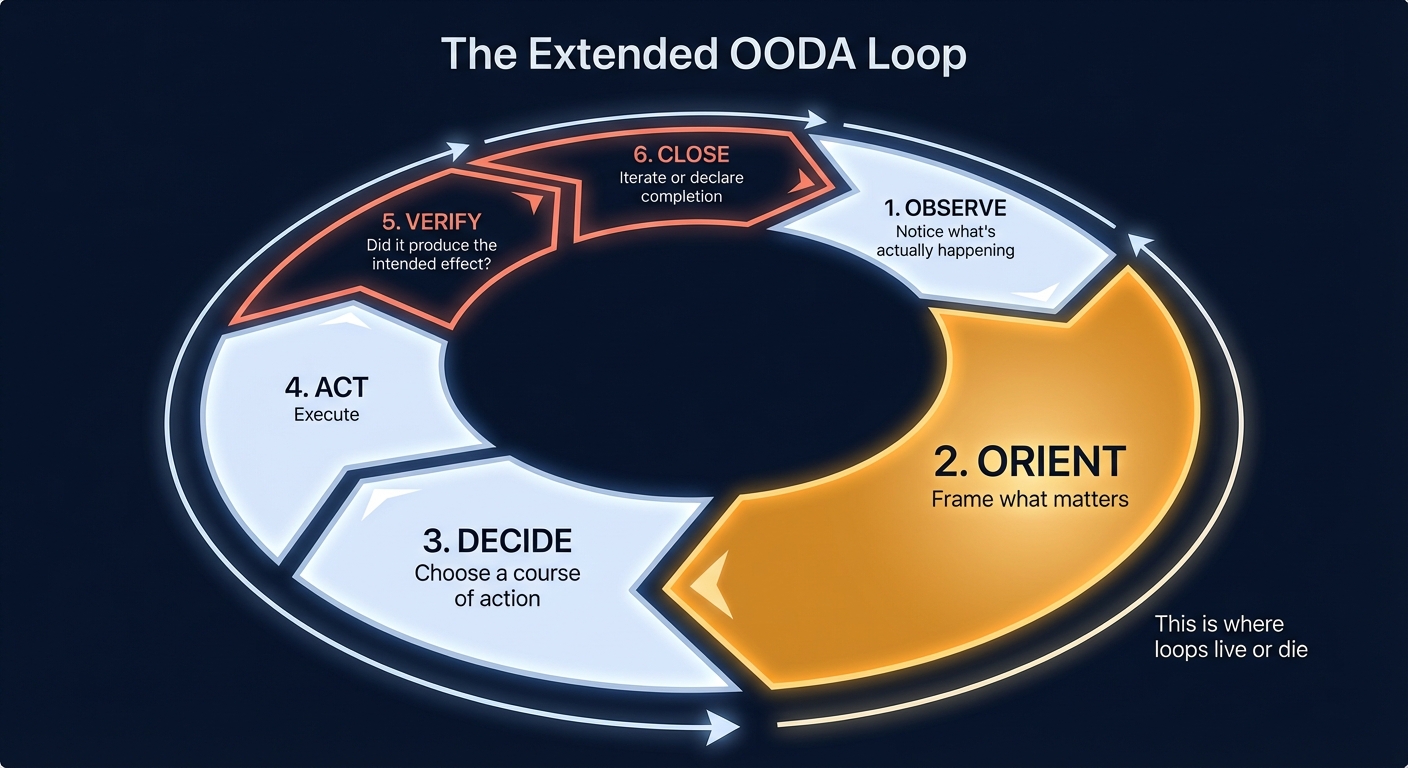

A loop, in this frame, is a complete cycle from problem identification through solution delivery. "Without external scaffolding" means without the managers, processes, approvals, and institutional supports that guide you through steps you can't yet navigate alone. If you're familiar with John Boyd's OODA loop, a decision-making framework originally developed for military strategy, you'll recognize the bones here. But I've extended it to include verification and closure, which is where most loops actually fail.

The full cycle: observe, orient, decide, act, verify, close.

These steps aren't equal. Orientation, the act of framing what actually matters, is disproportionately where loops live or die. It is where people struggle most, and where organizations create the friction and bottlenecks that often kill a person's ability to grow their LCR.

Most people can close small loops independently. Given a well-defined task with clear success criteria, they can execute and verify. Others with more experience and drive can close larger loops. Given a vague, complex problem and broad authority, they can frame it, solve it, and ship it. The difference isn't binary. It's a radius across problem spaces with varying complexity, scope, and context.

This maps onto the GenAI Tractability Grid I wrote about earlier. AI agents face the same radius constraints. The complexity and context density of a problem determines how much of the loop AI can close alone, and how much still requires a human with the judgment to navigate ambiguity.

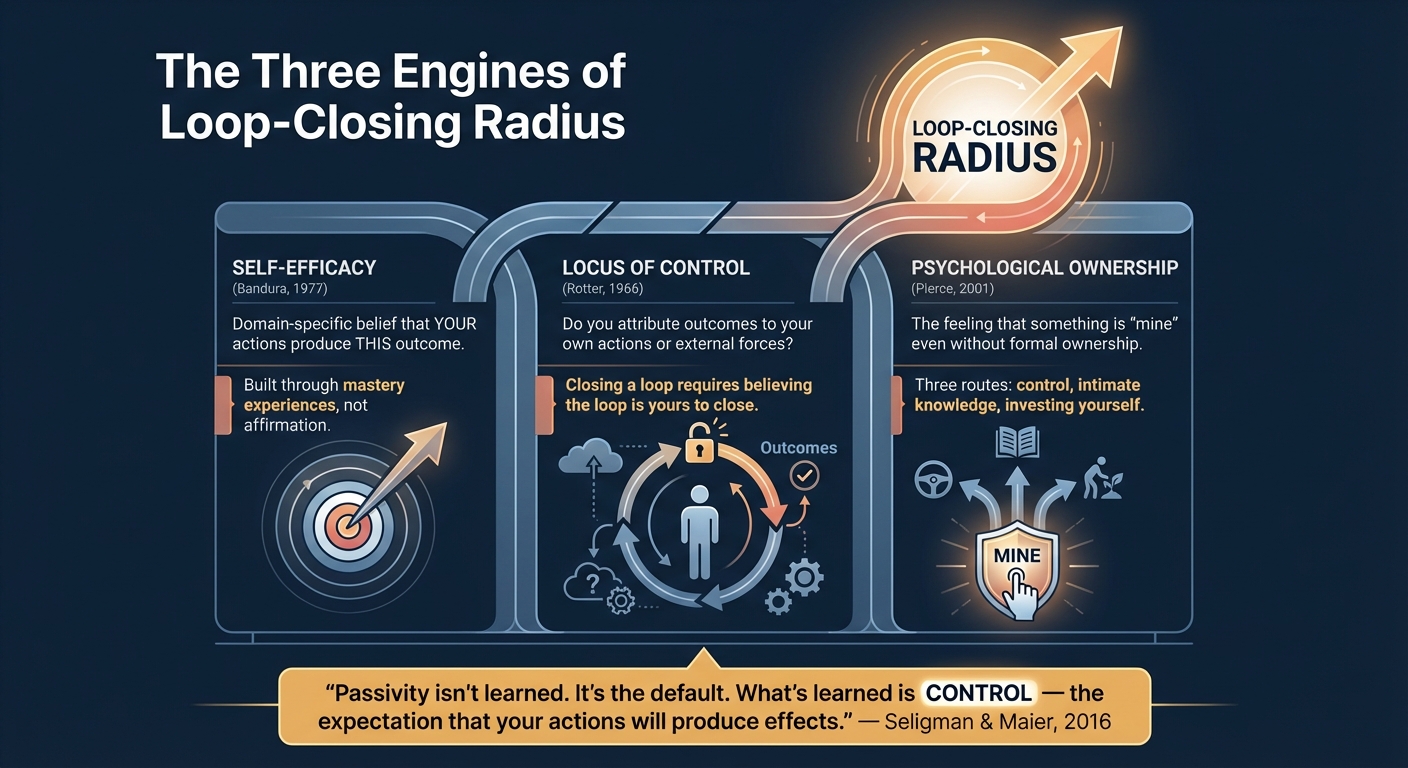

Why can some people close larger loops than others? I think the research actually gives us a clear answer here: there are three interlocking factors.

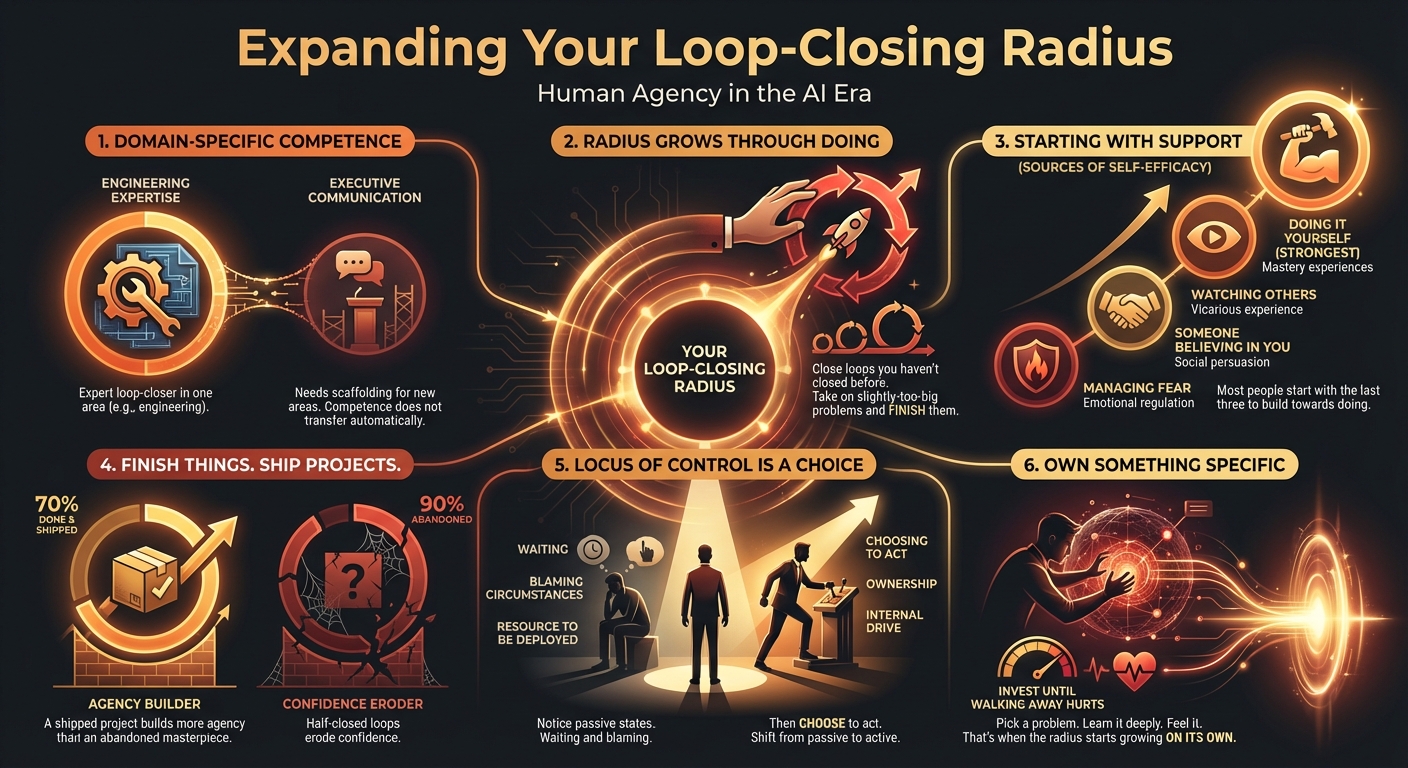

The first is what Albert Bandura called self-efficacy: your belief that your actions can produce desired effects in a specific domain. Not general confidence. Domain-specific conviction that you can cause this outcome. High self-efficacy means you'll attempt harder problems and persist through setbacks. Low self-efficacy means you'll interpret difficulty as evidence of inadequacy and give up earlier. Self-efficacy is built through mastery experiences, not through affirmation. You develop it by doing things and watching them work. This is why loop-closing radius expands through practice, not through pep talks or shallow training.

The second is locus of control, from Julian Rotter's work. Do you attribute outcomes to your own actions or to external forces? Closing a loop requires believing the loop is yours to close. If you're waiting for permission or for someone else to clear the path, you've ceded the decision about whether to act. Your effective radius shrinks regardless of your skill. Allowing others to determine your locus of control in the AI era is a slow death for your career and livelihood.

The third is psychological ownership, from Jon Pierce's organizational research: the feeling that something is "mine" even without formal ownership. Pierce identified three routes to it: exercising control over something, developing intimate knowledge of how it works, and investing yourself in its development. When you psychologically own a domain, you take responsibility without being assigned it. You think about it when you're not required to. This is the "barrel" quality Rabois describes, but it's not an innate trait. It's what happens when someone has spent enough time with a problem to care about it — when they've developed the intimate knowledge and exercised enough control that walking away would feel like abandoning something that's theirs.

Saint-Exupéry understood this: "If you want to build a ship, don't drum up people to collect wood and don't assign them tasks and work, but rather teach them to long for the endless immensity of the sea." Ownership isn't assigned. It's kindled.

All three of these are developmental. They can be built, and they can also be suppressed by environments that punish initiative, withhold autonomy, or concentrate decision-making authority.

There's a deeper finding that ties this together. Martin Seligman and Steven Maier originally proposed that organisms learn helplessness from uncontrollable negative events. But in 2016, they revisited their own theory with new neuroscience and found they had it backwards: the brain's default state is to assume control is not present. Passivity isn't learned. It's the baseline. What's learned is control, the expectation that your actions will produce effects. Each completed loop deposits evidence that action matters, that the world responds to your choices. Agency is built one closed loop at a time.

Our job as leaders and individuals is to deliberately create the conditions that move people from passive helplessness to proactive agency.

This is what makes loop-closing radius different from "barrels vs. ammunition." It's developmental, not fixed.

It's domain-specific: a 20-year engineering leader might close enormous technical loops while still needing scaffolding for executive communication. And most importantly, it puts the locus of development inside the person. The question shifts from "what kind of resource are you?" to "what can you own?" Nobody else decides your radius. You expand it yourself.

What AI Changes

Now add AI to the picture.

The tools are getting absurdly powerful: by some estimates, AI effective compute is compounding at roughly 50x per year through combined hardware, investment, and algorithmic gains. Tasks that required teams now require individuals. Projects that took months now take days. The marginal cost of execution is collapsing toward zero.
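To get a feel for what "roughly 50x per year" implies, here is a small arithmetic sketch. It takes the 50x figure from the estimate cited above at face value and assumes, purely for illustration, that the growth compounds smoothly at a constant rate; the function names are hypothetical.

```python
import math

# The "roughly 50x per year" estimate cited in the text, taken at face value.
ANNUAL_GROWTH = 50.0


def cumulative_growth(years: float, annual: float = ANNUAL_GROWTH) -> float:
    """Total multiplier after `years`, assuming constant compounding."""
    return annual ** years


def doubling_time_months(annual: float = ANNUAL_GROWTH) -> float:
    """Months needed to double at the given annual multiplier."""
    return 12 * math.log(2) / math.log(annual)


for years in (1, 2, 3):
    print(f"after {years} year(s): {cumulative_growth(years):,.0f}x")
# after 1 year(s): 50x
# after 2 year(s): 2,500x
# after 3 year(s): 125,000x

print(f"doubling time: {doubling_time_months():.1f} months")
# doubling time: 2.1 months
```

Under those assumptions, effective compute doubles about every two months, which is why execution cost looks like it is collapsing rather than merely declining.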

In this environment, the binding constraint shifts. It's no longer "who can execute?" It's "who can close the loop?"

AI can do enormous amounts of work. It can draft, analyze, code, and generate options. What it cannot do is own the consequences of being wrong. AI can simulate orientation. It can propose framings, suggest priorities, generate strategies. But it cannot bear the professional, reputational, or moral weight of choosing wrong. That's where human leverage lives and AI can't follow: not capability, but accountability. Someone has to decide that this is the priority, maintain coherent intent through a complex execution, verify that the outcome solves the real problem, and put their name on it. Those are acts of agency that require a person who believes the loop is theirs to close.

The people who will thrive in the AI era are not the ones with the most technical skill or the deepest domain expertise. They're the ones with the widest loop-closing radius. The ones who can take an ambiguous problem, digest it into clarity, and maintain intent through complex execution. The ones who verify outcomes against actual goals and ship solutions that solve the real problem. They don't wait for permission, don't need someone holding their hand through each step, and don't hand off the judgment calls.

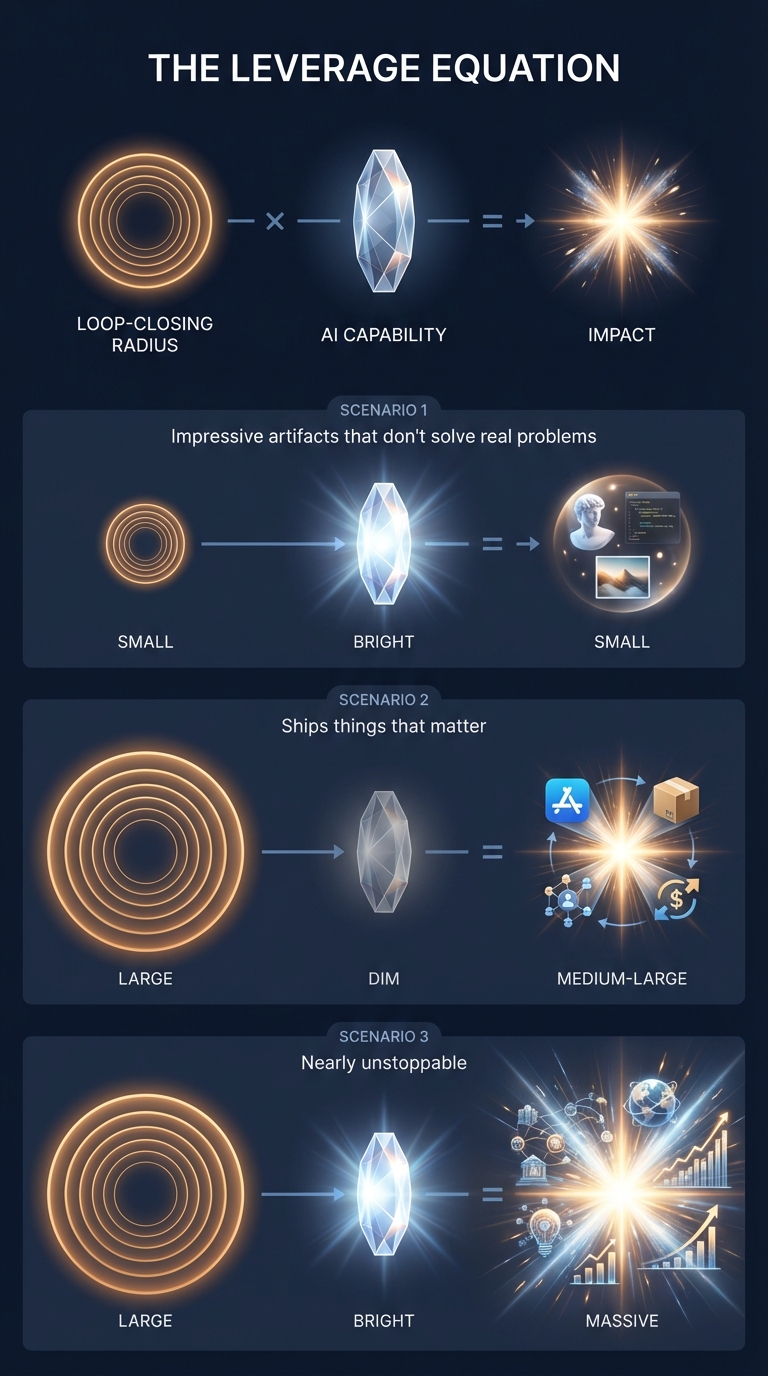

This is the new leverage equation: Loop-closing radius x AI capability = impact.

Someone with a narrow loop-closing radius and excellent AI skills will produce impressive artifacts that don't solve real problems. Someone with a wide loop-closing radius and basic AI skills will ship things that matter. Someone with both will be nearly unstoppable.

Wide radius without good orientation is dangerous, not just ineffective. Someone who can close large loops autonomously but frames the problem wrong will produce enormous, well-executed damage. The leverage equation cuts both ways. Organizations can't simply hand out wider mandates without investing in the judgment that makes those mandates productive.
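The leverage equation and its "cuts both ways" caveat can be sketched as a toy model. Everything here is an illustrative assumption rather than a measurement scheme: the 0-10 scales, the `Operator` class, and especially the `orientation` sign, which is added only to show how a wrong framing turns the same leverage into damage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Operator:
    """Hypothetical scores; the article defines no units or scales."""
    radius: float              # loop-closing radius, assumed 0-10
    ai_capability: float       # AI fluency and tooling, assumed 0-10
    orientation: float = 1.0   # assumed: +1.0 framed right, -1.0 framed wrong


def impact(op: Operator) -> float:
    """Leverage equation from the text, times an assumed orientation sign."""
    return op.radius * op.ai_capability * op.orientation


well_framed = Operator(radius=8, ai_capability=8)
misframed = Operator(radius=8, ai_capability=8, orientation=-1.0)

print(impact(well_framed))  # 64.0
print(impact(misframed))    # -64.0
```

Because the terms multiply, a wider radius or better tooling scales whatever the orientation points at, good or bad. That is the case for investing in judgment before widening mandates.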

The Organizational Implication

Organizations are not ready for this.

Most organizations are structured around the assumption that execution is the bottleneck and coordination is the binding constraint. They have elaborate systems for dividing work, coordinating handoffs, reviewing outputs, and integrating contributions. These systems made sense when execution was expensive and coordination was the only way to accomplish complex goals.

But when ever-greater execution zones fit inside a single human mind and toolset, coordination becomes overhead. Every handoff is a place where intent gets lost. Every review is a place where someone who doesn't own the loop makes decisions for someone who does. Every integration point is a place where the original problem gets subordinated to organizational convenience, bureaucratic friction, and "that's not how we do things around here" dysfunction.

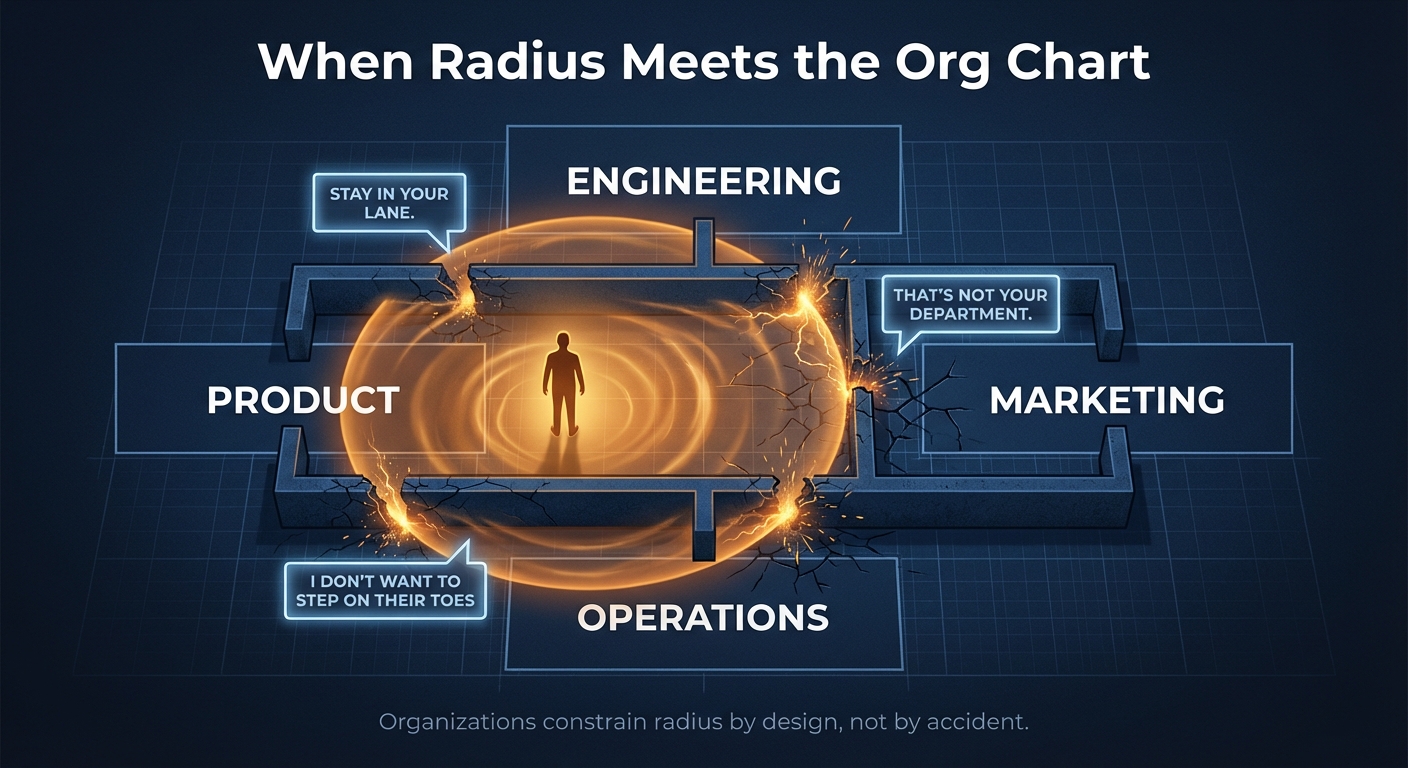

Let's be honest about why this is hard: narrow mandates aren't just an inefficiency to be redesigned. They're power structures. Middle managers whose reports start closing loops that bypass the chain of command don't celebrate the efficiency gain. They feel threatened. Organizations constrain loop-closing radius not by accident but because concentrated decision-making authority is how hierarchies maintain themselves. Any serious effort to widen radii will run into this resistance. You've heard the phrases: "stay in your lane," "I don't want to step on their toes," "that's not your department." All coded cultural values that disable change and prevent individuals from closing bigger loops.

The organizations that figure this out will win. The ones that don't will watch their most capable people leave. Wide-radius people don't need the organization as much as the organization needs them. When the cost of going independent drops (and AI is dropping it fast), the best loop-closers may simply walk out rather than fight the bureaucracy.

The organizations that will retain them are the ones that figure out how to identify, develop, and unleash people with wide loop-closing radii — a transformation that requires strategic intent.

This means:

Evaluating talent differently. Stop asking "are they a barrel?" Start asking "what's their current radius, and how fast is it expanding?" The first question sorts. The second invests.

Cultivating AI Pilots at every level. Organizations need high-agency people throughout: people who pull problems toward themselves rather than waiting for the handoff to arrive neatly packaged, who have the tools and permission to close loops wherever they find them. Build systems that smooth the path for these people rather than forcing them through approval chains designed for a slower era.

Building radius deliberately. This means stretch assignments with real stakes, not training programs with simulated ones. It means feedback that helps people calibrate their self-efficacy accurately, not performance reviews that sort them into bins.

Deploying AI as a radius amplifier. Not as a replacement for human judgment, but as a way to let people close loops they couldn't close alone through effective AI training and tooling. The question isn't "which jobs can AI do?" It's "whose radius does AI expand, and what do we unleash them on?"

The Individual Implication

If you're reading this and thinking about your own loop-closing radius, here's what the research suggests:

Your radius is domain-specific. Don't extrapolate from one domain to another. Ask yourself: in this domain, how large a loop can I close without scaffolding? Where do I need permission, validation, or support that others might not?

Your radius expands through practice, not insight. You can't read your way into a larger radius. You have to close loops you haven't closed before. This means taking on problems slightly larger than you're confident you can solve, and completing them even when it's uncomfortable.

But the first step usually isn't solo mastery. Bandura identified four sources of self-efficacy, not one. Mastery experience is the strongest, but it's rarely where people start in unfamiliar territory. More often, the entry point is vicarious experience, watching someone else close a loop and thinking "I could do that." Or it's verbal persuasion, someone you trust saying "you're ready for this" at the right moment. Or it's simply managing the anxiety enough to try. The solo mastery comes later, once you've built enough evidence to attempt it.

Finish things. Half-closed loops don't build self-efficacy. They erode it. A completed project that's 70% of what you envisioned deposits more evidence of agency than an ambitious one you abandoned at 90%.

Locus of control is a choice. Not entirely. Early experiences shape your default. But you can notice when you're waiting for permission, when you're attributing outcomes to external forces, when you're treating yourself as ammunition rather than agent. And you can choose differently.

Own something specific. Not in the abstract. Pick a domain, a problem, a system. Learn it well enough that you notice when something's wrong before anyone tells you. Invest enough that walking away would cost you something. That's when the radius starts expanding on its own.

I know, I know. None of this is easy. I've spent most of my career expanding my own radius, and it never stops being uncomfortable. You just get better at tolerating the discomfort. Frankly, most of us begin to crave it. It signals growth. Expanding your loop-closing radius means operating at the edge of your capability, in territory where you don't have evidence that your actions will work. It means accepting responsibility for outcomes you can't fully control, and finishing things even when you're not sure they're right.

But the alternative, waiting for someone to load you into a barrel and aim you at a target, is increasingly untenable. The age of ammunition is ending. The age of agency is beginning.

One more thing worth noting: AI is the first tool in human history that evolves faster than human adaptation cycles. You can't master it in the traditional sense. Which is exactly why loop-closing radius matters more than any specific AI skill. The tools will change. The ability to responsibly take a problem from ambiguity to outcome won't.

The good news: agency is a muscle you can grow. Loop-closing radius expands with predictable, intentional practice. All of this is within our own locus of control.

And frankly, the stakes go beyond individual careers. We're deciding right now what kind of world AI builds. Should it be one where a shrinking number of people have agency and everyone else gets sorted into "ammunition" — low-res NPCs in someone else's game? Or one where more people than ever can own meaningful problems and ship meaningful solutions?

Let me know in the comments what you think about these ideas, and what you need to help you grow and expand your ability to solve problems in the age of AI.

Links & Resources

The Source Material

- Keith Rabois, "How to Operate" (Stanford, 2014) - The original barrels vs. ammunition lecture

- Albert Bandura, "Self-Efficacy: Toward a Unifying Theory of Behavioral Change" (1977) - The foundational paper on self-efficacy

- Julian Rotter, "Generalized Expectancies for Internal vs External Control of Reinforcement" (1966) - Locus of control, original paper

- Seligman & Maier, "Learned Helplessness at Fifty: Insights from Neuroscience" (2016) - The inversion: passivity is default, control is learned

- Jon Pierce et al., "Toward a Theory of Psychological Ownership in Organizations" (2001) - Three routes to ownership

Additional Sources

- Tomas Pueyo, "The $100M Worker" (Uncharted Territories, 2026) - Analysis of AI effective compute compounding ~50x per year through hardware, investment, and algorithmic gains

- Lee Gonzales, "The GenAI Tractability Grid" - Framework for mapping which problems AI can solve alone vs. requiring human judgment

Concepts Explained

- OODA Loop - John Boyd's observe-orient-decide-act framework, originally developed for fighter pilots, now widely applied to strategy and decision-making

- Self-efficacy - Your domain-specific belief that your actions can produce desired outcomes. Not "confidence" in general, but conviction in a specific area

- Locus of control - Whether you believe outcomes result from your own actions (internal) or from external forces (external)

- Learned helplessness - Originally: organisms learn passivity from uncontrollable events. Updated: passivity is default, and control is the learned state

Lee Gonzales is the Director of AI Transformation at BetterUp and the Founder of Catalyst AI Services and Differential AI Labs. He writes about AI enablement, human agency, and the organizational transformations both require.

Appendix: Article Spec

Thesis: The divergence in AI-era outcomes isn't about technical skill. It's about loop-closing radius: the size of the problem you can take from ambiguity to verified outcome without external scaffolding.

Angle: Rabois's "barrels vs. ammunition" framework is sticky but flawed: binary, acontextual, passive. Loop-closing radius is continuous, domain-specific, and developmental. It explains the AI capability gap better and offers a path forward.

Framework: OODA loop as the structure of a "loop" (observe, orient, decide, act, verify, close).

Historical anchor: Bandura (self-efficacy, 1977), Rotter (locus of control, 1966), Seligman (learned helplessness, 1967 + 2016 inversion).

Recent anchor: Cate Hall's agency writing (2024), Pew Research on AI and human agency (2023).

Key tension: If loop-closing radius is developmental, why do organizations still sort people into fixed categories? And does AI actually expand radius, or just amplify existing radius while atrophying it for those who delegate judgment?